The Surveillance in Your Child's Backpack: What School-Issued Devices Are Really Doing

How school Chromebooks and iPads became one of the most pervasive — and least-discussed — surveillance systems in American life, and what parents, educators, and policymakers are doing about it.

When a parent in California discovers that a school IT administrator watched her seventh-grade daughter stream an anime episode at home — and emailed her within minutes — most people's first reaction is disbelief. When they learn this is not unusual, that reaction turns to alarm.

Across the United States, 88% of schools now provide students with personal devices, most commonly Google Chromebooks or Apple iPads. What began as an equity initiative — closing the digital divide by giving every child access to a computer — has quietly evolved into something far more complex: a pervasive, always-on surveillance infrastructure that follows students home, into bedrooms, and across their most private moments.

This article examines the full scope of school device surveillance, the companies profiting from it, the documented harms, and the growing parent rebellion demanding something different.

The Scale of the Problem

The numbers are staggering. GoGuardian, one of the dominant players in the student surveillance market, monitors approximately 27 million students across 11,500 schools nationwide. Gaggle Safety Management tracks the online activity of roughly 6 million students in 1,500 districts. Bark, Securly, Lightspeed — these companies together constitute a multi-billion-dollar industry built on the premise that children must be watched at all times on school-issued devices.

According to the Center for Democracy & Technology, 81% of teachers report that their school districts use some form of student activity monitoring software. Perhaps more alarming: only one in four say that monitoring is limited to school hours. That leaves a large share of districts where tracking can continue in students' homes, during evenings, and on weekends.

"Students have no expectation of confidentiality or privacy with respect to any usage of a Chromebook, regardless of whether that use is for school-related or personal purposes." — Actual language from a 2025–2026 school district Chromebook policy

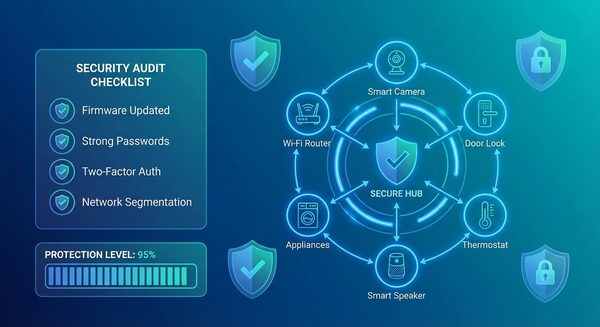

The technical capabilities of these systems go well beyond simple web filtering. School administrators using tools like GoGuardian have access to students' browsing histories, documents, videos, app and extension data, keystroke logs, location data, live screen views, and in some cases, webcam footage — all in real time. Some monitoring software operates both when devices are connected to the internet and when they are offline, queuing up collected data for upload when a connection is restored.

A Cybersecurity Perspective: What's Actually Being Collected

From a security standpoint, what schools have built — often inadvertently — is a remarkably comprehensive data collection apparatus. Consider what a single Chromebook can expose:

- Full browsing history across all sessions, tagged to a student's permanent school identity

- Search queries, including health-related, personal, and sensitive searches

- Location data tied to the device's IP address and GPS (where enabled)

- All keystrokes logged through monitoring extensions

- Screenshots taken at intervals or in real time

- Document contents from Google Drive and other cloud services

- Communications including email drafts, chat logs, and assignment submissions

- App usage patterns and installed extensions

This data is not only collected by the school — it flows to third-party vendors whose data retention policies, security practices, and business models are rarely disclosed to parents. The Electronic Frontier Foundation (EFF), which has conducted extensive investigations into edtech privacy, found that educational technology services routinely collect far more information on children than is necessary and store this information indefinitely, often without adequate security safeguards.

The cybersecurity risk is compounded by the fact that many school districts are chronically underfunded and understaffed. A data breach at a school district doesn't just expose adults who have legal recourse — it exposes minors, often for years or decades of their lives, through permanent records tied to their real identities.

In 2021, a U.S. Senate investigation by Senators Markey and Warren found that edtech surveillance platforms were operating without adequate safeguards, potentially compounding racial disparities in school discipline, and draining resources from more effective student support systems.

The Surveillance Companies: Who They Are and How They Operate

GoGuardian

GoGuardian is perhaps the most widely deployed student surveillance platform in the United States. The company bills itself as a tool to "keep students safe" while enabling personalized learning. In practice, it gives teachers and administrators a live stream of every student's screen, complete browsing history, and an AI-powered flagging system that generates alerts when students visit sites containing certain keywords.

The EFF's investigation of GoGuardian found that its false positive rate is staggeringly high — students have been flagged for visiting the official Marine Corps fitness guide, reading about the cast of Shark Tank, researching the Holocaust, looking up LGBTQ+ health information, and accessing classic literature including Romeo and Juliet. The EFF built a public tool called the "Red Flag Machine" to demonstrate just how absurd the flagging system is, derived from real GoGuardian data.
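To see why keyword-driven flagging over-triggers, consider a deliberately naive sketch. This is not GoGuardian's actual algorithm, which is not public. The watch list, the `flag_page` function, and the sample page titles are all hypothetical, chosen only to show how substring matching ignores context.

```python
# Hypothetical illustration only: a naive keyword flagger, not any vendor's real system.
FLAG_KEYWORDS = {"suicide", "kill", "gun", "drug"}  # assumed watch list

def flag_page(title: str) -> list[str]:
    """Return the watch-list keywords found anywhere in a page title, sorted."""
    lowered = title.lower()
    # Plain substring search: "skills" matches "kill", and a literature
    # summary matches "suicide", because no context is considered.
    return sorted(k for k in FLAG_KEYWORDS if k in lowered)

# Made-up page titles for demonstration.
pages = [
    "Romeo and Juliet: the double suicide",
    "Study skills worksheet",
    "Photosynthesis study guide",
]
for page in pages:
    print(page, "->", flag_page(page))
```

In this toy version, a literature summary and a study-skills worksheet are both flagged while nothing of concern has happened. Real systems are more elaborate, but the same failure mode appears whenever matching is done on words rather than meaning.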

Beyond inaccurate flagging, GoGuardian's Beacon feature monitors for mental health signals — and has, in documented cases, alerted school officials to students' private struggles in ways that caused further harm rather than support.

Gaggle Safety Management

Gaggle takes monitoring a step further by scanning students' emails, documents, and online communications 24 hours a day using AI. When its algorithm detects potential indicators of self-harm, bullying, or violence, it sends a screenshot to human reviewers employed by Gaggle, who then decide whether to alert the school.

A 2025 investigation by the Christian Science Monitor and Fortune revealed deeply troubling outcomes from Gaggle's deployment in the public schools of Vancouver, Washington. A gay student who had written privately about struggles with homophobic parents had his communications flagged, and school officials acting on a Gaggle alert subsequently outed him to his family. A 13-year-old transgender student was reported after reflecting on a past suicide attempt and subsequent therapy in a school writing assignment. School officials "freaked out" about what the student described as a nonexistent crisis, retraumatizing him in the process.

A 2023 RAND study found only "scant evidence" that AI surveillance tools measurably lower student suicide rates or reduce school violence. Meanwhile, the vendor has a commercial interest in the problems it flags: Gaggle also sells online counseling services.

The 'Pay for Privacy' Divide

Civil liberties organizations including the EFF have highlighted a troubling equity dimension to school device surveillance. The students most likely to rely exclusively on school-issued devices — because their families cannot afford personal laptops — are disproportionately lower-income students and students of color. This creates what the EFF calls a "pay for privacy" scheme: wealthier students can avoid surveillance by using personal devices for sensitive activities, while economically disadvantaged students have no such escape.

The Center for Democracy & Technology has documented that historically marginalized groups of students face compounding disadvantages — both fewer educational opportunities and greater surveillance — through the very programs ostensibly designed to help them.

The Parent Rebellion

Across the country, parents have begun to push back — and they are organizing with remarkable sophistication.

In Los Angeles, Lila Byock watched her son's math grades collapse from A's to D's and F's after his school issued him a mandatory iPad. When she began connecting with other parents, she heard variations of the same story: kids watching YouTube during class, playing Fortnite during school hours, getting sucked into online forums, and in one heartbreaking case, a child running away from home with his school-issued iPad after being drawn into contact with strangers online.

Byock founded Schools Beyond Screens, which now has chapters at 20 schools in the Los Angeles area and has been pressuring the LA Unified School District — the second-largest in the country, serving over 409,000 students — to pull back on mandatory screen time. The coalition's organizing has reached a fever pitch, with around 300 parents attending district listening sessions.

In the Conejo Valley Unified School District, Julie Frumin fought her district to opt her children out of school-issued Chromebooks entirely, citing headaches, distraction, and concerns about AI chatbots being integrated into educational software. Initially told opting out was only allowed for standardized testing and sexual health content, she persisted — and ultimately succeeded. Her son was "beaming" when she told him his laptop was being taken away. Both children now do their work by hand, and both say they are happier.

Emily Cherkin, a former teacher who testified before Congress about screen time in education, created a publicly available opt-out toolkit that has been downloaded over 3,000 times. It includes research on digital learning efficacy, email templates for approaching administrators, and suggested questions for school officials.

"For me, opting out is not the end goal — it's the means to the end. It forces a conversation. It gives permission to other parents to even just start asking questions." — Emily Cherkin, former teacher and education technology researcher

What the Research Actually Shows

The educational justification for widespread device adoption in schools has always rested on an assumption: that more technology produces better learning outcomes. The research record tells a more complicated story.

Studies examining academic performance have found that students who used computers extensively at school performed worse on key metrics. Readers also retain information measurably better from paper than from screens. The i-Ready adaptive learning platform, mandated by districts across California, operates largely as a black box: parents and teachers cannot see what questions students are being asked because the company considers them proprietary intellectual property.

Faith Boninger, a researcher at the University of Colorado Boulder's National Education Policy Center, argues that the entire premise of mandatory edtech adoption is flawed: "Students don't need to be consumers of this technology in order to be able to use it in 10 or 15 years, when it's likely going to be something else entirely."

Meanwhile, the behavioral consequences documented by parents are significant. A first-grade boy in North Hollywood wet himself four times in one month after being required to complete one hour of mandatory iPad time per day for i-Ready assignments — he simply couldn't pull himself away from the device to use the restroom. The incidents stopped when his teacher agreed to limit his iPad time to 20 minutes.

One LA Unified parent put the issue starkly at a district listening session: "You're basically giving them the cocaine, and then you're telling the teachers that they have to figure out how to get it out of the kids' hands."

The Legal Landscape

Federal law provides some protections for student data. The Family Educational Rights and Privacy Act (FERPA) generally bars schools from disclosing personally identifiable information from student education records to third parties without written parental consent, subject to certain exceptions. The Children's Online Privacy Protection Act (COPPA) limits data collection on children under 13. The Children's Internet Protection Act (CIPA), by contrast, requires schools to filter harmful content as a condition of receiving federal broadband funding, which is part of why districts cite legal obligation when deploying monitoring software.

But the gap between legal protections on paper and the reality of surveillance in practice is wide. The EFF found that parents frequently receive no written disclosure about edtech data collection practices. A survey found that 57% of parents were sure they had received no such disclosure, and another 23% weren't sure either way — meaning roughly 80% of surveyed parents lacked clear information about what was being collected on their children.

In May 2025, the Student Privacy Pledge — a voluntary industry commitment signed by hundreds of vendors — was quietly retired. Its sunset reflects a growing consensus that self-regulation in this space has failed, and that meaningful protection requires enforceable policy. The FTC updated its COPPA rules in April 2025 to restrict long-term student data retention and require explicit opt-in consent for targeted advertising.

The EFF has also taken the issue to federal court. In a November 2025 amicus brief in Merrill v. Marana Unified School District, the organization argued that schools cannot claim that a student's use of a school-issued Chromebook makes all their speech — even speech conducted at home, off-hours, in a deleted email draft — automatically subject to school authority and punishment. The brief argued that such a rule would "incentivize public schools to continue eroding student privacy by subjecting them to near constant digital surveillance," and would disproportionately harm lower-income students who have no alternative device to use.

A Cybersecurity Professional's View: What Should Change

From a security and privacy standpoint, the current approach to student devices represents a series of systemic failures that would not be tolerated in most enterprise environments.

Data Minimization. Schools should only collect data necessary for a specific, disclosed purpose. The current model — comprehensive, indefinite collection across every dimension of student activity — inverts this principle entirely. School districts should conduct regular data audits and delete information that is no longer needed.
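As one way to picture what a retention rule could look like in practice, here is a minimal sketch. It assumes a hypothetical record format (a dict with a `collected_at` timestamp) and an arbitrary 30-day window. Neither is drawn from any district's or vendor's actual policy.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)  # assumed window, for illustration only

def purge_expired(records, now):
    """Return only the records collected within the retention window."""
    cutoff = now - RETENTION
    return [r for r in records if r["collected_at"] >= cutoff]

# Made-up records: one recent, one several months old.
now = datetime(2025, 9, 1, tzinfo=timezone.utc)
records = [
    {"id": 1, "collected_at": datetime(2025, 8, 25, tzinfo=timezone.utc)},
    {"id": 2, "collected_at": datetime(2025, 3, 1, tzinfo=timezone.utc)},
]
kept = purge_expired(records, now)
print([r["id"] for r in kept])  # only the recent record survives
```

The point of the sketch is that deletion is a scheduled default rather than something that happens only on request. The right window is a policy decision, not a technical one.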

Transparency and Informed Consent. Parents and students should receive clear, plain-language disclosure of what data is collected, by whom, for how long, and under what circumstances it may be shared. This disclosure should happen before device deployment, not after. Families who decline should have access to non-digital alternatives.

Scope Limitation. Monitoring should be limited to school hours and school networks. The Boulder Valley School District's approach — using GoGuardian only during school hours, with active surveillance features disabled at home — provides a workable model. Content filtering can continue off-hours for safety purposes, but live screen monitoring, keystroke logging, and data collection should stop when the school day ends.
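A schedule gate of this kind is simple to express. The sketch below assumes a hypothetical bell schedule (8:00 to 15:30, Monday through Friday) and assumes the device can tell whether it is on the school network. The function name `monitoring_allowed` is illustrative and does not correspond to any vendor's setting.

```python
from datetime import datetime, time

SCHOOL_START = time(8, 0)    # assumed bell schedule
SCHOOL_END = time(15, 30)

def monitoring_allowed(now: datetime, on_school_network: bool) -> bool:
    """Allow live monitoring only on weekdays, in school hours, on the school network."""
    is_weekday = now.weekday() < 5  # Monday=0 ... Friday=4
    in_hours = SCHOOL_START <= now.time() < SCHOOL_END
    return is_weekday and in_hours and on_school_network

print(monitoring_allowed(datetime(2025, 9, 3, 10, 15), True))   # Wednesday morning, at school
print(monitoring_allowed(datetime(2025, 9, 3, 20, 0), False))   # Wednesday evening, at home
print(monitoring_allowed(datetime(2025, 9, 6, 10, 15), True))   # Saturday
```

Only the first call returns `True`. Content filtering could run on a separate, always-on path, which keeps the safety function while switching off live viewing and logging outside the school day.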

Vendor Accountability. School districts should apply the same vendor risk management standards to edtech companies that any responsible enterprise applies to its technology vendors. This means security assessments, contractual data handling requirements, breach notification obligations, and regular third-party audits. Districts should be particularly cautious about vendors whose business model depends on monetizing student data.

False Positive Accountability. Any surveillance system generating the volume of false positives documented in GoGuardian and Gaggle deployments would be considered broken in a professional security context. Schools should demand accuracy metrics and hold vendors accountable for the documented harms their inaccurate flagging causes — including the outing of LGBTQ+ students, the mischaracterization of minority students as threatening, and the psychological harm of being falsely flagged as a safety risk.
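One accuracy metric a district could ask for is alert precision: the share of reviewed alerts that turned out to be genuine concerns. The sketch below shows the calculation. The counts are made up for illustration and are not drawn from any GoGuardian or Gaggle deployment.

```python
def alert_precision(true_alerts: int, false_alerts: int) -> float:
    """Share of reviewed alerts that turned out to be genuine concerns."""
    total = true_alerts + false_alerts
    if total == 0:
        raise ValueError("no reviewed alerts")
    return true_alerts / total

# Hypothetical review counts for one month.
genuine, false_alarms = 12, 388
precision = alert_precision(genuine, false_alarms)
print(f"precision: {precision:.1%}")
print(f"false alerts per genuine alert: {false_alarms / genuine:.1f}")
```

With these invented numbers, 3% of alerts are genuine and staff handle about 32 false alarms for every real one. Publishing figures like these per vendor and per semester would let a district judge whether a tool is worth the harm its errors cause.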

Equitable Opt-Out. Students who opt out of school-issued devices should have access to equivalent educational opportunities. The current situation — where opting out requires navigating bureaucratic resistance and often results in teachers printing out assignments manually — is neither scalable nor equitable. Districts should develop formal analog learning pathways that are not treated as punishments or anomalies.

Conclusion: The Education We're Actually Providing

There is a profound irony in the way schools have deployed surveillance technology in the name of student safety and educational equity. The students most in need of protection — those from lower-income families who cannot afford an alternative device — are the most surveilled. The students ostensibly being prepared for a digital future are being taught, through lived experience, that privacy does not exist, that authority always watches, and that every word they type may be read by someone they never chose to trust.

The EFF's Jason Kelley put it directly: "With surveillance-specific apps like GoGuardian and Gaggle, students are being taught that they have no right to privacy and that they can always be monitored." That is a lesson with consequences that will extend far beyond the classroom.

The parent rebellion underway — from Los Angeles to Seattle to Conejo Valley — is not anti-technology. Most of these parents understand that their children will live and work in a digital world. Their objection is more specific: that mandatory, 24-hour surveillance of children on devices they have no choice but to use is not preparation for that world. It is practice for a different kind of world entirely.

The question for school administrators, policymakers, and technology vendors is not whether to use technology in education. It is whether the surveillance infrastructure that has been built in the name of education is consistent with the values of privacy, dignity, and trust that education is supposed to instill.